Seven technologies to watch in 2023

From protein sequencing to electron microscopy, and from archaeology to astronomy, here are seven technologies that are likely to shake up science in the year ahead.

Single-molecule protein sequencing

The proteome represents the complete set of proteins made by a cell or organism, and can be deeply informative about health and disease, but it remains challenging to characterize.

Proteins are assembled from a larger alphabet of building blocks than nucleic acids are, with roughly 20 naturally occurring amino acids (compared with the four nucleotides that form molecules such as DNA and messenger RNA); this results in much greater chemical diversity. Some proteins are present in the cell as just a few molecules and, unlike nucleic acids, proteins cannot be amplified, meaning that protein-analysis methods must work with whatever material is available.

Most proteomic analyses use mass spectrometry, a technique that profiles mixtures of proteins on the basis of their mass and charge. These profiles can quantify thousands of proteins simultaneously, but the molecules detected cannot always be identified unambiguously, and low-abundance proteins in a mixture are often overlooked. Now, single-molecule technologies that can sequence many, if not all, of the proteins in a sample could be on the horizon — many of them analogous to the techniques used for DNA.

Edward Marcotte, a biochemist at the University of Texas at Austin, is pursuing one such approach, known as fluorosequencing1. Marcotte’s technique, reported in 2018, is based on a stepwise chemical process in which individual amino acids are fluorescently labelled and then sheared off one by one from the end of a surface-coupled protein as a camera captures the resulting fluorescent signal. “We could label the proteins with different fluorescent dyes and then watch molecule by molecule as we cut them away,” Marcotte explains. Last year, researchers at Quantum-Si, a biotechnology firm in Guilford, Connecticut, described an alternative to fluorosequencing that uses fluorescently labelled ‘binder’ proteins to recognize specific sequences of amino acids (or polypeptides) at the ends of proteins2.

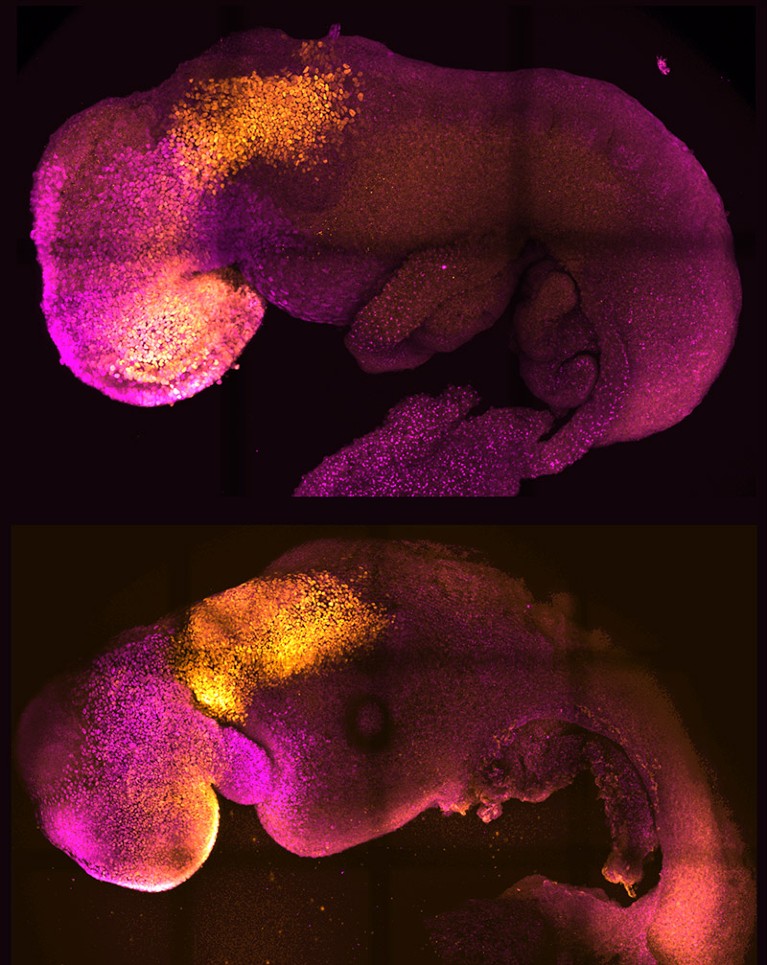

Researchers can now make synthetic embryos in the laboratory (bottom) that resemble natural, eight-day-old embryos (top). Credit: Magdalena Zernicka-Goetz Laboratory

Other researchers are developing techniques that emulate nanopore-based DNA sequencing, profiling polypeptides on the basis of the changes they induce in an electric current as they pass through tiny channels. Biophysicist Cees Dekker at Delft University of Technology in the Netherlands and his colleagues demonstrated one such approach in 2021 using nanopores made of protein, and were able to discriminate between individual amino acids in a polypeptide passing through the pore3. And at the Technion — Israel Institute of Technology in Haifa, biomedical engineer Amit Meller’s team is investigating solid-state nanopore devices manufactured from silicon-based materials that could enable high-throughput analyses of many individual protein molecules at once. “You might be able to look at maybe tens of thousands or even millions of nanopores simultaneously,” he says.

Although single-molecule protein sequencing is only a proof of concept at present, commercialization is coming fast. Quantum-Si has announced plans to ship first-generation instruments this year, for example, and Meller notes that a protein-sequencing conference in Delft in November 2022 featured a discussion panel dedicated to start-ups in this space. “It reminds me a lot of the early days before next-generation DNA sequencing,” he says.

Marcotte, who co-founded the protein-sequencing company Erisyon in Austin, Texas, is bullish. “It’s not really a question of whether it will work,” he says, “but how soon it will be in people’s hands.”

James Webb Space Telescope

Astronomers began last year on the edges of their collective seats. After a design and construction process lasting more than two decades, NASA — in collaboration with the European and Canadian space agencies — successfully launched the James Webb Space Telescope (JWST) into orbit on 25 December 2021. The world had to stand by for nearly seven months as the instrument unfolded and oriented itself for its first round of observations.

It was worth the wait. Matt Mountain, an astronomer at the Space Telescope Science Institute in Baltimore, Maryland, who is a telescope scientist for JWST, says the initial images exceeded his lofty expectations. “There’s actually no empty sky — it’s just galaxies everywhere,” he says. “Theoretically, we knew it, but to see it, the emotional impact is very different.”

JWST was designed to pick up where the Hubble Space Telescope left off. Hubble generated stunning views of the Universe, but had blind spots: ancient stars and galaxies with light signatures in the infrared range were essentially invisible to it. Rectifying that required an instrument with the sensitivity to detect incredibly faint infrared signals originating billions of light years away.

The final design for JWST incorporates an array of 18 perfectly smooth beryllium mirrors that, when fully unfolded, has a diameter of 6.5 metres. So precisely engineered are those mirrors, says Mountain, that “if you stretched a segment out over the United States, no bump could be more than a couple of inches [high].” These are coupled with state-of-the-art near- and mid-infrared detectors.

That design allows JWST to fill in Hubble’s gaps, including capturing signatures from a 13.5-billion-year-old galaxy that produced some of the first atoms of oxygen and neon in the Universe. The telescope has also yielded some surprises: for instance, it can measure the atmospheric composition of certain classes of exoplanet.

Researchers around the world are queueing up for observation time. Mikako Matsuura, an astrophysicist at Cardiff University, UK, is running two studies with JWST, looking at the creation and destruction of the cosmic dust that can contribute to star and planet formation. “It’s a completely different order of sensitivity and sharpness” compared with the telescopes her group has used in the past, Matsuura says. “We have seen completely different phenomena ongoing inside these objects — it’s amazing.”

Volume electron microscopy

Electron microscopy (EM) is known for its outstanding resolution, but mostly at the surface level of samples. Going deeper requires carving a specimen into exceptionally thin slices, which for biologists are often insufficient for the task. Lucy Collinson, an electron microscopist at the Francis Crick Institute in London, explains that it can take 200 sections to cover the volume of just a single cell. “If you’re just getting one [section], you’re playing a game of statistics,” she says.

Now researchers are bringing EM resolution to 3D tissue samples encompassing many cubic millimetres.

Previously, reconstructing such volumes from 2D EM images — for example, to chart the neural connectivity of the brain — involved a painstaking process of sample preparation, imaging and computation to turn those images into a multi-image stack. The latest ‘volume EM’ techniques now drastically streamline this process.

Those techniques have various advantages and limitations. Serial block-face imaging, which uses a diamond-edged blade to shave off thin sequential layers of a resin-embedded sample as it is imaged, is relatively fast and can handle samples approaching one cubic millimetre in size. However, it offers poor depth resolution, meaning the resulting volume reconstruction will be comparatively fuzzy. Focused ion beam scanning electron microscopy (FIB-SEM) yields much thinner layers — and thus finer depth resolution — but is better suited to smaller-volume samples.

Collinson describes the rise of volume EM as a ‘quiet revolution’, with researchers highlighting the results of this approach rather than the techniques used to generate them. But this is changing. For example, in 2021, researchers working on the Cell Organelle Segmentation in Electron Microscopy (COSEM) initiative at Janelia Research Campus in Ashburn, Virginia, published a pair of papers in Nature highlighting substantial progress in mapping the cellular interior4,5. “It’s a very impressive proof of principle,” says Collinson.

The COSEM initiative uses sophisticated, bespoke FIB-SEM microscopes that increase the volume that can be imaged in a single experiment by roughly 200-fold, while preserving good spatial resolution. Using a bank of these machines in conjunction with deep-learning algorithms, the team was able to define various organelles and other subcellular structures in the full 3D volume of a wide range of cell types.

The sample-preparation methods are laborious and difficult to master, and the resulting data sets are massive. But the effort is worthwhile: Collinson is already seeing insights in infectious-disease research and cancer biology. She is now working with colleagues to explore the feasibility of reconstructing the entire mouse brain at high resolution — an effort she predicts will take more than a decade of work, cost billions of dollars and produce half a billion gigabytes of data. “It’s probably on the same order of magnitude as the effort to map the first human genome,” she says.

CRISPR anywhere

The genome-editing tool CRISPR–Cas9 has justifiably earned a reputation as the go-to method for introducing defined changes at targeted sites throughout the genome, driving breakthroughs in gene therapy, disease modelling and other areas of research. But there are limits as to where it can be used. Now, researchers are finding ways to circumvent those limitations.

CRISPR editing is coordinated by a short guide RNA, which directs an associated Cas nuclease enzyme to its target genomic sequence. But this enzyme also requires a nearby sequence called a protospacer adjacent motif (PAM); without one, editing is likely to fail.

At the Massachusetts General Hospital in Boston, genome engineer Benjamin Kleinstiver has used protein engineering to create ‘near-PAMless’ Cas variants of the commonly used Cas9 enzyme from the bacterium Streptococcus pyogenes. One Cas variant requires a PAM of just three consecutive nucleotide bases with an A or G nucleotide in the middle position6. “These enzymes now read practically the entire genome, whereas conventional CRISPR enzymes read anywhere between 1% and 10% of the genome,” says Kleinstiver.
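To make the PAM constraint concrete, the following toy sketch counts how many positions in a DNA string are followed by a usable PAM under two rules. It assumes the canonical NGG motif for S. pyogenes Cas9, which the text does not spell out, and writes the relaxed three-base motif described above as NRN (any base, then A or G, then any base). The function name, the example sequence and the single-strand scan are illustrative assumptions, and the counts are not meant to reproduce the genome-wide percentages that Kleinstiver quotes.

```python
import re

# IUPAC-style codes used in the two PAM patterns below.
IUPAC = {"N": "[ACGT]", "R": "[AG]", "A": "A", "C": "C", "G": "G", "T": "T"}


def count_pam_sites(sequence: str, pam: str, protospacer_len: int = 20) -> int:
    """Count forward-strand positions where a protospacer is followed by `pam`."""
    pattern = re.compile("".join(IUPAC[base] for base in pam))
    sequence = sequence.upper()
    hits = 0
    # The PAM must start right after a full-length protospacer.
    for start in range(protospacer_len, len(sequence) - len(pam) + 1):
        if pattern.fullmatch(sequence, start, start + len(pam)):
            hits += 1
    return hits


# Hypothetical 40-base sequence, chosen only for illustration.
example = "ATGCTTACCGGATTCAGCTTAGCATCCGTACTGATCTAGG"
ngg_sites = count_pam_sites(example, "NGG")
nrn_sites = count_pam_sites(example, "NRN")
print(f"NGG sites: {ngg_sites}, NRN sites: {nrn_sites}")
```

Every NGG motif also satisfies NRN, so the relaxed rule can only add candidate sites. A fuller version would also scan the reverse strand and score each candidate for off-target risk.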

Such less-stringent PAM requirements increase the chances of ‘off-target’ edits, but further engineering can improve their specificity. As an alternative approach, Kleinstiver’s team is engineering and testing large numbers of Cas9 variants that each exhibit high specificity for distinct PAM sequences.

There are also many naturally occurring Cas variants that remain to be discovered. In nature, the CRISPR–Cas9 system is a bacterial defence mechanism against viral infection, and different microorganisms have evolved various enzymes with distinct PAM preferences. Virologist Anna Cereseto and microbiome researcher Nicola Segata at the University of Trento in Italy have combed through more than one million microbial genomes to identify and characterize a diverse set of Cas9 variants, which they estimate could collectively target more than 98% of known disease-causing mutations in humans7.

Only a handful of these will work in mammalian cells, however. “Our idea is to test many and see what are the determinants that make those enzymes work properly,” says Cereseto. Between the insights gleaned from these natural enzyme pools and high-throughput protein-engineering efforts, Kleinstiver says, “I think we’ll end with a pretty complete toolbox of editors that allow us to edit any base that we want”.

High-precision radiocarbon dating

Last year, archaeologists took advantage of advances in radiocarbon dating to home in on the precise year — and even the season — in which Viking explorers first arrived in the Americas. Working with pieces of felled timber unearthed in a settlement on the northern shore of Newfoundland, Canada, a team led by isotope-analysis expert Michael Dee at the University of Groningen in the Netherlands and his postdoc Margot Kuitems determined that the tree was likely to have been cut down in the year 1021, probably in the spring8.

Scientists have been using radiocarbon dating of organic artefacts since the 1940s to narrow down the dates of historical events. They do so by measuring traces of the isotope carbon-14, which is formed as a result of the interaction of cosmic rays with Earth’s atmosphere and which decays slowly over millennia. But the technique is usually precise only to within a couple of decades.
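The decay that underpins the method can be sketched with a short calculation. The example assumes the commonly cited carbon-14 half-life of about 5,730 years, which is not stated in the text, and it ignores the calibration against past atmospheric carbon-14 levels that real dating requires. The function name is illustrative.

```python
import math

# Assumed half-life of carbon-14 in years (commonly cited value, not from the article).
HALF_LIFE_YEARS = 5730.0


def uncalibrated_age(fraction_remaining: float) -> float:
    """Years elapsed for the carbon-14 content to fall to `fraction_remaining`."""
    if not 0.0 < fraction_remaining <= 1.0:
        raise ValueError("fraction_remaining must be in (0, 1]")
    # Exponential decay: N(t) = N0 * 0.5 ** (t / half_life), solved for t.
    return -HALF_LIFE_YEARS * math.log2(fraction_remaining)


# A sample retaining half of its original carbon-14 is one half-life old.
print(uncalibrated_age(0.5))
```

Under this assumption, two samples that differ in age by 20 years differ in remaining carbon-14 by only about a quarter of a per cent, which is one reason that small measurement uncertainties translate into decades.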

Precise radiocarbon dating of timber at L’Anse aux Meadows in Newfoundland, Canada, revealed that Vikings cut down a tree at the site in 1021. Credit: All Canada Photos/Alamy

Things changed in 2012, when researchers led by physicist Fusa Miyake at Nagoya University in Japan showed9 they could date a distinctive spike in carbon-14 levels in the rings of a Japanese cedar tree to AD 774–5. Subsequent research10 not only confirmed that this spike was present in wood samples around the world from this period, but also identified at least five other such spikes dating as far back as 7176 BC. Researchers have linked these spikes to solar-storm activity, but this hypothesis is still being explored.

Whatever their cause, these ‘Miyake events’ allow researchers to put a precise pin in the year in which wooden artefacts were created, by detecting a specific Miyake event and then counting the rings that formed since then. Researchers can even establish the season in which a tree was harvested, on the basis of the thickness of the outermost ring, Kuitems says.
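The ring-counting logic can be sketched as follows. This is a simplified illustration, not the procedure used by Dee and Kuitems: it assumes one carbon-14 measurement per annual ring, ordered from the innermost ring outwards, and it locates the event as the largest ring-to-ring increase. The function name, the synthetic values and the choice of 775 as the year of the spike-bearing ring are all assumptions made for the example.

```python
from typing import Sequence


def outermost_ring_year(c14_by_ring: Sequence[float], event_year: int) -> int:
    """Calendar year of the outermost ring, anchored on a single carbon-14 spike.

    `c14_by_ring` holds one value per annual ring, innermost first.
    `event_year` is the known year of the ring in which the spike appears.
    """
    if len(c14_by_ring) < 2:
        raise ValueError("need at least two rings to find a spike")
    # Ring-to-ring increases; the largest one marks the spike-bearing ring.
    jumps = [later - earlier for earlier, later in zip(c14_by_ring, c14_by_ring[1:])]
    spike_index = jumps.index(max(jumps)) + 1
    # Each ring after the spike adds one year.
    rings_after_spike = len(c14_by_ring) - 1 - spike_index
    return event_year + rings_after_spike


# Synthetic, made-up measurements (arbitrary units) with a jump at the fourth ring.
rings = [0.0, 0.2, -0.1, 12.0, 11.5, 10.9, 10.1, 9.6, 9.0, 8.7]
print(outermost_ring_year(rings, event_year=775))
```

The result is the year in which the last ring grew, so it is only as reliable as the assumption that the outermost ring is intact. Real samples would also need a statistical test to confirm that a jump is a genuine Miyake event rather than measurement noise.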

Archaeologists are now applying this approach to Neolithic settlements and sites of volcanic eruption, and Dee hopes to use it to study the Mayan empire in Mesoamerica. In the next decade or so, Dee is optimistic that “we will have really absolute records for a lot of these ancient civilizations to the exact year, and we’ll be able to talk about their historical development … at a really fine scale”.

As for Miyake, her search for historical yardsticks continues. “We are now searching for other carbon-14 spikes comparable to the 774–5 event for the past 10,000 years,” she says.

Single-cell metabolomics

Metabolomics — the study of the lipids, carbohydrates and other small molecules that drive the cell — was originally a set of methods for characterizing metabolites in a population of cells or tissues, but is now shifting to the single-cell level. Scientists could use such cellular-level data to untangle the functional complexity in vast populations of seemingly identical cells. But the transition poses daunting challenges.

The metabolome encompasses vast numbers of molecules with diverse chemical properties. Some of these are highly ephemeral, with subsecond turnover rates, says Theodore Alexandrov, a metabolomics researcher at the European Molecular Biology Laboratory in Heidelberg, Germany. And they can be hard to detect: whereas single-cell RNA sequencing can capture close to half of all the RNA molecules produced in a cell or organism (the transcriptome), most metabolic analyses cover only a tiny fraction of a cell’s metabolites. This missing information could include crucial biological insights.

“The metabolome is actually the active part of the cell,” says Jonathan Sweedler, an analytical chemist at the University of Illinois at Urbana-Champaign. “When you have a disease, if you want to know the cell state, you really want to look at the metabolites.”

Many metabolomics labs work with dissociated cells, which they trap in capillaries and analyse individually using mass spectrometry. By contrast, ‘imaging mass spectrometry’ methods capture spatial information about how cellular metabolite production varies at different sites in a sample. For instance, researchers can use a technique called matrix-assisted laser desorption/ionization (MALDI), in which a laser beam sweeps across a specially treated tissue slice, releasing metabolites for subsequent analysis by mass spectrometry. This also captures the spatial coordinates from which the metabolites originated in the sample.

In theory, both approaches can quantify hundreds of compounds in thousands of cells, but achieving that typically requires top-of-the-line, customized hardware costing in the million-dollar range, says Sweedler.

Now, researchers are democratizing the technology. In 2021, Alexandrov’s group described SpaceM, an open-source software tool that uses light microscopy imaging data to enable spatial metabolomic profiling of cultured cells using a standard commercial mass spectrometer11. “We kind of did the heavy lifting on the data-analysis part,” he says.

Alexandrov’s team has used SpaceM to profile hundreds of metabolites from tens of thousands of human and mouse cells, turning to standard single-cell transcriptomic methods to classify those cells into groups. Alexandrov says he is especially enthusiastic about this latter aspect and the idea of assembling ‘metabolomic atlases’ — analogous to those developed for transcriptomics — to accelerate progress in the field. “This is definitely the frontier, and will be a big enabler,” he says.

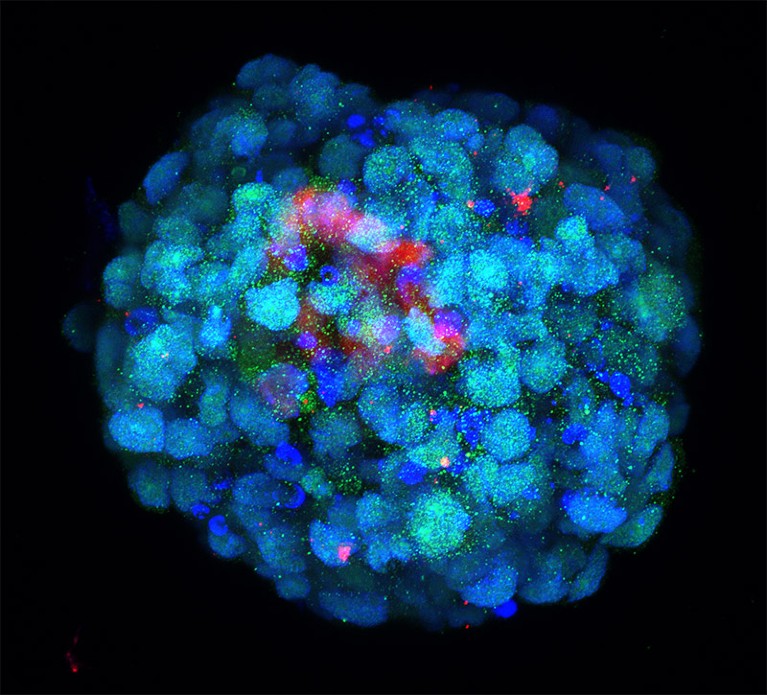

In vitro embryo models

The journey from fertilized ovum to fully formed embryo has been mapped in detail at the cellular level for mice and humans. But the molecular machinery driving the early stages of this process remains poorly understood. Now a flurry of activity in ‘embryoid’ models is helping to fill these knowledge gaps, giving researchers a clearer view of the vital early events that can determine the success or failure of fetal development.

Some of the most sophisticated models come from the lab of Magdalena Zernicka-Goetz, a developmental biologist at the California Institute of Technology in Pasadena and the University of Cambridge, UK. In 2022, she and her team demonstrated that they could generate implantation-stage mouse embryos entirely from embryonic stem (ES) cells12,13.

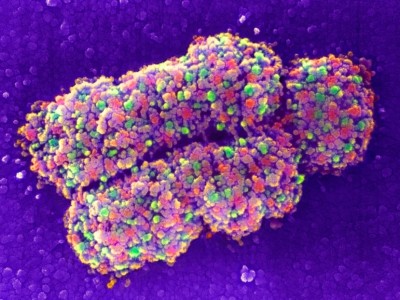

An embryoid made using cells engineered to resemble the eight-cell stage of an embryo. Credit: M. A. Mazid et al./Nature

Like all pluripotent stem cells, ES cells can form any cell or tissue type — but they require close interaction with two types of extra-embryonic cell to complete normal embryonic development. The Zernicka-Goetz team learnt how to coax ES cells into forming these extra-embryonic cells, and showed that these could be co-cultured with ES cells to yield embryo models that mature to stages that were previously unattainable in vitro. “It’s as faithful as you can imagine an embryonic model,” says Zernicka-Goetz. “It develops a head and heart — and it’s beating.” Her team was able to use this model to reveal how alterations in individual genes can derail normal embryonic development12.

At the Guangzhou Institutes of Biomedicine and Health, Chinese Academy of Sciences, stem-cell biologist Miguel Esteban and colleagues are taking a different tack: reprogramming human stem cells to model the earliest stages of development.

“We started with the idea that actually it might even be possible to make zygotes,” Esteban says. The team didn’t quite achieve that, but they did identify a culture strategy that pushed these stem cells back to something resembling eight-cell human embryos14. This is a crucial developmental milestone, associated with a massive shift in gene expression that ultimately gives rise to distinct embryonic and extra-embryonic cell lineages.

Although imperfect, Esteban’s model exhibits key features of cells in natural eight-cell embryos, and has highlighted important differences between how human and mouse embryos initiate the transition to the eight-cell stage. “We saw that a transcription factor that is not even expressed in the mouse regulates the whole conversion,” says Esteban.

Collectively, these models can help researchers to map how just a few cells give rise to the staggering complexity of the vertebrate body.

Research on human embryos is restricted beyond 14 days of development in many countries, but there’s plenty that researchers can do within those constraints. Non-human primate models offer one possible alternative, Esteban says, and Zernicka-Goetz says that her mouse-embryo strategy can also generate human embryos that develop as far as day 12. “We still have lots of questions to ask within that stage that we are comfortable studying,” she says.